Abdul-Rahman al-Rawi, a student working towards his diploma in construction, stepped out of his home in a quiet street in al-Qaim, western Iraq, having heard a volley of noise overhead.

Within seconds, the 20-year-old was dead, killed instantly by the impact of a US missile destroying the stationary car he was standing next to in the early hours of that February morning in 2024.

His brother Anmar describes in grisly detail how Abdul-Rahman died in the attack, telling a joint investigation by conflict monitoring group Airwars and The Independent: “It took us two days to gather all of my brother’s remains.”

Abdul-Rahman was caught up in one of 85 co-ordinated attacks carried out by the US that night against Iraqi government-aligned forces and Iranian-backed militias in Iraq and Syria.

The operation was deemed a success, with a senior American official subsequently boasting they had used state-of-the-art AI technology to achieve pinpoint precision.

But that boast soon unravelled as it emerged that up to three innocent bystanders may have been killed.

Abdul-Rahman's death was not disputed, and prompted a letter of condolence from US officials, who accepted he had been killed by mistake.

But the exact sequence of events – and the role of AI in military offensive operations including in the ongoing US and Israeli operations in Iran – has been brought sharply into focus.

The Independent and Airwars investigation suggests Abdul-Rahman is the first acknowledged civilian victim of an AI-assisted airstrike.

But when this was put to US Central Command (Centcom), it said it “does not know” whether the strike killing Abdul-Rahman had used AI-assisted targeting – a claim that one expert says raises “every red flag I can think of”.

“We have no way of knowing whether this strike is one of the 85… described,” Centcom said. “No errors were found in the analysts’ vetting process or the Collateral Damage Estimate Process; CJTF-OIR followed all rules of engagement.”

Experts sounded the alarm over these remarks in interviews with The Independent.

“The implications of saying we have no way of knowing whether this strike was one of the 85 AI-enabled strikes is that they’re not keeping record of ‘targeting’ assessments,” says Jessica Dorsey, a professor of international law who specialises in AI warfare at Utrecht University.

“They’re not able to trace back the provenance of information and intelligence that fed into that being selected as a strike.”

Another expert, Dr Elke Schwarz of the London School of Economics, warns that because of the scale and speed of these AI-assisted strike systems, “human judgement somewhat falls behind”.

How an AI-assisted attack killed a civilian

The attack on al-Qaim, a town near the Syrian border around 400km northwest of Baghdad, was in response to an attack on a US base in northern Jordan in late January 2024 which killed three troops.

The US strikes reduced several buildings in al-Qaim to rubble. Up to 15 people were wounded and between three and five medical personnel in the Popular Mobilization Forces (PMF) were also allegedly killed. Medical workers are protected under international humanitarian law.

Abdul-Rahman's death tore his family apart. “My dad remains deeply depressed,” says Anmar, a nurse in the local hospital. “My mother suffered a heart attack and high blood pressure ever since. Every time she is reminded of her baby boy she breaks down.”

Centcom said the intended targets in the attacks on 2-3 February that year, which were condemned by the Iraqi government, included command and control operations centers, rocket launchers, and supply chain facilities.

Later that month, Centcom’s then-chief technology officer, Schuyler Moore, declared in an interview with Bloomberg that the targets that night had been identified with help from machine-learning algorithms known as Project Maven.

It is believed to be the US military’s first such public declaration about the use of AI in individual strikes.

Initially developed by the Pentagon, Project Maven was adopted by the National Geospatial-Intelligence Agency (NGA), and uses computer vision algorithms to locate and identify targets from satellite imagery as well as radar to detect movement and track targets.

“We’ve been using computer vision to identify where there might be threats. We’ve certainly had more opportunities to target in the last 60 to 90 days,” said Moore. In a later interview, she told the outlet: “Maven has become exceptionally critical to our function.”

It was months later, in a civilian harm report in late 2024, that the US military would admit for the first time that it was “more likely than not” that two civilian deaths occurred as a result of the strike.

In July the following year, a Department of Defense (DoD) report confirmed an adult man had been killed in the strike, and that his family had been contacted to express condolences.

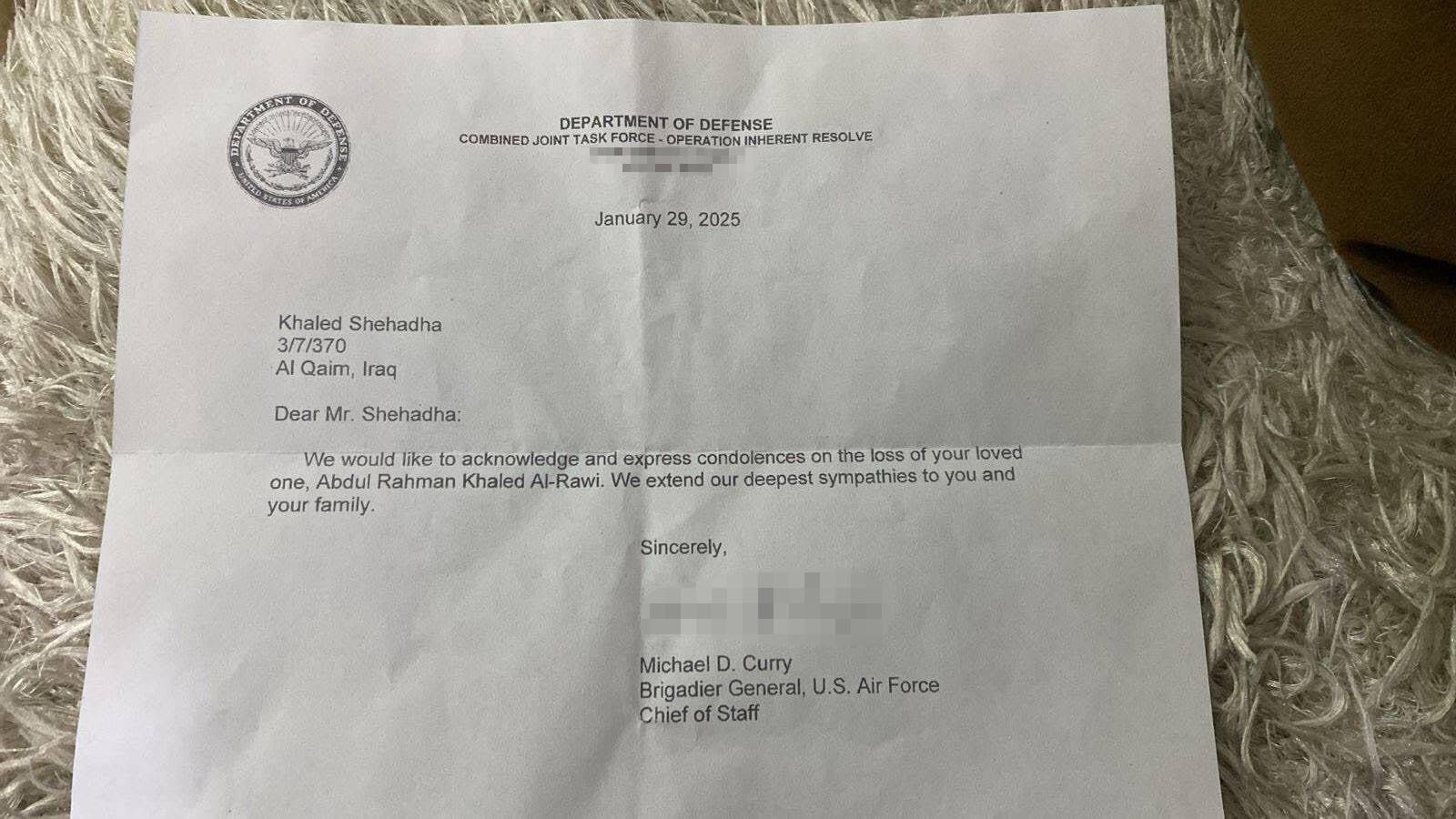

“We would like to acknowledge and express condolences on the loss of your loved one, Abdul Rahman Khaled Al-Rawi. We extend our deepest sympathies to you and your family,” reads the letter, signed by a US Air Force general, which was obtained by Airwars after tracking down Abdul-Rahman's family online.

They were left angered by the two-line letter, in which the US military declined to take explicit responsibility for the deaths, Anmar says. “We got nothing from them. Just misery.”

And it raises even more questions about Project Maven.

Project Maven and the unnerving future of warfare

Project Maven, the flagship US project to adopt machine learning across the military which was launched in 2017, is at the heart of the concerns about AI trumping human decision-making.

Advanced militaries are increasingly seeking to integrate AI into all aspects of the so-called “kill chain” in a bid to speed up targeting and gain advantages over rivals.

Among the concerns, Project Maven in particular struggles to adapt to different terrains, and in some conditions its accuracy can drop to below 30 per cent, US officials told Bloomberg.

Another risk lies in “automation bias”, Prof Dorsey says, in which humans eventually begin trusting the computer’s output without critically assessing the target themselves.

The more that militaries rely on AI-assisted targeting, the more their personnel are offloading their own responsibilities to the machines: “We’re de-skilling ourselves. Commanders are getting less good at identifying what they are responsible to do on a battlefield.”

Dr Schwarz, who specialises in warfare technology, adds that AI-assisted targeting is “subverting the idea of what it is that fighting and killing in war has meant to date”.

“Humans have a tendency to not question decisions that are made by computational outputs.”

In the US, the use of AI by the military has been at the centre of a high-profile dispute between AI company Anthropic and the DoD.

On 26 February, Anthropic rejected demands that it allow its AI tools, such as Claude, to be used by the DoD for mass domestic surveillance and fully autonomous weapons.

On 6 March, Caitlin Kalinowski, who led the robotics initiatives at OpenAI, resigned after the company agreed a deal with the DoD allowing its technology to be used in classified environments – which she warned could lead to “surveillance of Americans without judicial oversight and lethal autonomy without human authorization”.

This week, Anthropic launched legal action against the Trump administration, seeking to overturn the Pentagon’s decision to label it a “supply chain risk”.

Since 28 February, AI-assisted targeting is reported to have been used widely in attacks across Iran, which rights group the Human Rights Activists News Agency (HRANA) says have killed more than 1,200 civilians, including 194 children.

The US military’s Maven Smart System (MSS) has been paired with Anthropic’s Claude AI to carry out the strikes, The Washington Post reported. MSS is a broader decision support system which integrates separate AI-assisted targeting systems such as the NGA’s Project Maven. Both have been used by the US in active combat missions.

One of the critical aspects to understand about Abdul-Rahman's death is the degree to which the decision to carry out the strike was made by AI, and the level of confidence it gave to whoever carried out the strike about the legitimacy of the target.

But for Anmar, who says the family has received no form of compensation from the US military, no amount of hindsight will bring back his younger brother.